What Is Natural Language Processing?

Have you ever talked to a phone and had it talk back? Or wondered how your email knows which messages are junk? Both are made possible by a computer trick called Natural Language Processing (NLP).

Think of it like this: NLP is a way to teach computers how to understand and use our words, like when we talk or write. The big idea is to make computers learn our language, so we don't have to learn theirs.

How Computers Learn to Understand Words

Imagine you have a super smart robot. You don't want it to just copy what you say like a parrot. You want it to understand what your words mean. That's what NLP does. It helps computers understand what we are really saying.

This makes using computers feel easy and natural. It's the magic behind your smart speaker playing your favorite song, your phone guessing the next word you want to type, and apps that can translate words into another language.

Breaking It Down

So, how does a computer even start to understand our words? It has a few main jobs to do.

Here’s a quick look at the main jobs of Natural Language Processing.

What NLP Helps Computers Do

| NLP's Job | What It Means in Simple Terms |
|---|---|
| Taking In Words | First, the computer reads the words you type or listens to the words you say. |
| Figuring Out Meaning | Next, it tries to guess what you really mean. This is the tricky part, because words can mean different things. For example, "cool" can mean cold or it can mean something is awesome! |
| Doing Something | Finally, the computer uses what it learned to do something helpful, like answer your question or follow your command. |
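The three jobs in the table can be sketched as a tiny Python program. This is only a toy. It uses a made-up list of "weather clue" words to decide whether "cool" means cold or awesome. Real systems learn clues like these from lots of examples instead of a hand-written list.

```python
import re

# Hypothetical clue words and replies, made up for this example.
WEATHER_CLUES = {"weather", "outside", "today", "temperature"}
REPLIES = {
    "cold": "Maybe grab a jacket.",
    "awesome": "Glad you like it!",
}


def take_in(text: str) -> list[str]:
    """Step 1: read the words (lowercase them, drop punctuation)."""
    return re.findall(r"[a-z']+", text.lower())


def figure_out_meaning(words: list[str]) -> str:
    """Step 2: guess what "cool" means from the words around it."""
    if "cool" not in words:
        return "unknown"
    if WEATHER_CLUES & set(words):
        return "cold"
    return "awesome"


def do_something(meaning: str) -> str:
    """Step 3: act on the guessed meaning."""
    return REPLIES.get(meaning, "Sorry, I didn't understand.")


def respond(text: str) -> str:
    return do_something(figure_out_meaning(take_in(text)))


print(respond("It is cool outside today"))   # Maybe grab a jacket.
print(respond("Your new bike is so cool!"))  # Glad you like it!
```

The same word gets two different answers, depending only on the words around it.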

These steps turn our normal words into something a computer can work with.

The real magic of NLP isn't just knowing words. It's about working out why you said them and how you might feel. This is what makes talking to a computer feel more like talking to a person.

If you're curious to learn more, this complete guide on Natural Language Processing shows how it all works. Computers used to follow simple rules, but now they are smart enough to learn from tons of information. It's like they have read a huge part of the internet to learn how we talk.

A Quick Trip Through NLP History

To see how our gadgets got so smart, let's look back in time. The story of teaching computers our language didn't start with phones. It started a long, long time ago in the 1950s.

Imagine giant computers that filled a whole room. They could only follow very strict rules. People had to write down every single rule for grammar, like a giant rulebook for words. If a word wasn't in the book, the computer had no idea what to do.

The First Big Challenge

One of the first big tests was to make a computer translate words. In 1954, a computer called the IBM 701 was able to translate 60 sentences from Russian to English. It was a huge deal!

But using a rulebook was hard. Our language is messy and has lots of slang and strange sayings. For example, how do you explain "it's raining cats and dogs" to a computer that only knows rules? You can read more about these early NLP experiments to see how tricky it was.

A famous story says a computer once tried to translate "the spirit is willing, but the flesh is weak." The computer changed it to "the vodka is good, but the meat is rotten." This shows how just following rules without understanding the real meaning can go very wrong!

A Smarter Way to Learn

Then, someone had a better idea. What if we just let computers read a lot? Just like a kid learns to talk by listening, computers could learn by reading millions of books and web pages.

This changed everything. Computers started to see patterns all by themselves. They figured out that the words "king" and "queen" are related, or that "running" is like "jogging," without anyone telling them.

Now we have computers that work a bit like a brain. They have learned from so much writing on the internet that they can pick up on more than just words, including feelings and even some jokes. This long trip, from rulebooks to smart computers, is why your phone can finally understand what you mean.

How Computers Turn Our Words Into Numbers

So, how does a computer really learn our language? It can't read a book like we do. For a computer, everything we say or write has to be turned into the only thing it understands: numbers.

It's like taking apart a LEGO building. A computer doesn't see a whole sentence. It sees a pile of word-bricks that it needs to sort and understand one by one.

The first step is called tokenization. That's a fancy word for chopping up a sentence into its smallest parts, or "tokens." So, a sentence like "I love my new puppy" gets broken into five tokens: I, love, my, new, and puppy.
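Here is a minimal Python sketch of tokenization. It simply splits on spaces and punctuation. Many modern tools also chop long or rare words into smaller pieces, so treat this as the simplest possible version.

```python
import re


def tokenize(sentence: str) -> list[str]:
    # Keep each run of letters, digits, or apostrophes as one token.
    # Spaces and punctuation are thrown away.
    return re.findall(r"[A-Za-z0-9']+", sentence)


tokens = tokenize("I love my new puppy")
print(tokens)       # ['I', 'love', 'my', 'new', 'puppy']
print(len(tokens))  # 5
```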

From Words to Secret Codes

After the sentence is broken up, the computer is still stuck. The words "love" and "puppy" are just letters to a machine. To fix this, every single word gets its own special number code.

This secret code is like giving each word a spot on a giant map. This spot, which is a list of numbers, tells the computer two important things:

- What the word means.

- How it's connected to all the other words.

For example, the number codes for "king" and "queen" will be very close together on the map. The same is true for words like "happy" and "joyful." This is how a computer starts to understand that some words are alike.
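To see what "close together on the map" can mean, here is a small Python sketch. The three-number codes below are invented for illustration. Real systems learn hundreds of numbers per word from text. The sketch measures closeness with cosine similarity, which is one common choice. It gives a score near 1.0 when two codes point the same way.

```python
import math

# Made-up 3-number "map spots". Real systems learn hundreds of numbers
# per word from text; these values are invented for illustration.
WORD_CODES = {
    "king":   [0.9, 0.8, 0.1],
    "queen":  [0.9, 0.7, 0.2],
    "happy":  [0.1, 0.2, 0.9],
    "joyful": [0.2, 0.1, 0.9],
    "banana": [0.5, -0.6, -0.4],
}


def similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity: near 1.0 means the codes point the same way on the map."""
    dot = sum(x * y for x, y in zip(a, b))
    length_a = math.sqrt(sum(x * x for x in a))
    length_b = math.sqrt(sum(y * y for y in b))
    return dot / (length_a * length_b)


def closeness(word1: str, word2: str) -> float:
    return similarity(WORD_CODES[word1], WORD_CODES[word2])


print(round(closeness("king", "queen"), 2))    # 0.99
print(round(closeness("happy", "joyful"), 2))  # 0.99
print(round(closeness("king", "banana"), 2))   # -0.07
```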

This journey from simple rules to deep understanding is a big one. The way computers have gotten smarter over the years is the reason we have such cool tools today.

The first computers were stuck with a "rulebook." Today's computers learn from examples, a bit more like we do, which is how they can handle something as tricky as language.

Learning from a Giant Library

After turning words into number codes, the computer still needs to learn how words fit together. To do this, it studies a huge digital library of text, and what it learns is stored in something called a language model.

This is not a normal library. A language model is made by a computer reading billions of web pages, articles, and books. By looking at all this information, it learns the patterns of how we talk and write. It figures out that after the words "the dog chased," the next words are probably "the ball," not "the cloud."

A language model isn't really "thinking" like you do. It's just very, very good at guessing the next word based on all the patterns it has seen before.
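The "guess the next word" idea can be shown by simple counting. The Python toy below is a counting model, sometimes called an n-gram model. It looks at the last two words and picks the word it saw most often right after them. The four-sentence "library" is made up. Modern language models use neural networks instead of plain counts, so this only shows the pattern-guessing idea.

```python
from collections import Counter, defaultdict

CONTEXT_SIZE = 2  # how many earlier words the model looks at


def train(sentences: list[str]) -> dict:
    """Count which word comes right after each pair of words."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for i in range(len(words) - CONTEXT_SIZE):
            context = tuple(words[i:i + CONTEXT_SIZE])
            follows[context][words[i + CONTEXT_SIZE]] += 1
    return follows


def guess_next(follows: dict, text: str):
    """Return the word seen most often after the last two words, or None."""
    context = tuple(text.lower().split()[-CONTEXT_SIZE:])
    if context not in follows:
        return None
    return follows[context].most_common(1)[0][0]


# A tiny, made-up "library". Real language models read billions of pages.
library = [
    "the dog chased the ball",
    "the dog chased the cat",
    "the kid chased the ball",
    "the cloud floated by",
]
model = train(library)
print(guess_next(model, "the dog chased"))      # the
print(guess_next(model, "the dog chased the"))  # ball
```

"Ball" wins over "cat" because it followed "chased the" twice in the library, while "cat" followed it only once.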

This whole process—chopping up words, turning them into numbers, and guessing what comes next—is what makes today's language tools work. For example, special speech-to-text software uses these steps to turn your voice into written words super fast.

It listens to your voice, turns the sounds into number codes, and then uses its giant library to guess the right words. It feels like magic, but it’s really just a computer being very clever with numbers.

The Three Big Eras That Shaped NLP

Teaching computers to understand us didn't happen overnight. It happened in three big steps, or eras. Each step built on the last one to give us the smart tools we use today.

It's like going from a robot that can only follow a list of rules to a robot that can learn on its own.

The Rulebook Era

The first era began a long time ago in the 1950s. Back then, people tried to teach computers language by writing down every single grammar rule. It was like giving the computer a giant textbook and telling it to follow the rules exactly.

But this didn't work very well. Our language is full of jokes, slang, and exceptions to the rules. If you said something that wasn't in the rulebook, the computer would get confused.

The Learning From Examples Era

By the 1980s, people tried a new idea. Instead of giving computers a rulebook, they let them learn from real examples. This was a new era where computers started learning by reading millions of real sentences from books and news articles.

This was a huge improvement. Computers started to see patterns on their own. They figured out which words usually go together without anyone telling them. You can explore the full history of these NLP shifts to see how each step made computers smarter.

This was like learning to ride a bike by actually trying it, instead of just reading a book about it. The computer learned from real life, which made it much better at understanding how we really talk.

The Super Brain Era

Now we are in the third and current era: the Deep Learning Era. We have very powerful computers running programs that work a bit like a human brain. These programs have learned from more text than a person could read in a thousand years, gathered from huge parts of the internet!

Because they have learned from so much information, these computers can do more than just see patterns. They can often work out what you really mean, figure out if you're happy or sad from your words, and even write new sentences that sound like a person wrote them. This is the magic behind the smart helpers on our phones and computers today.

Everyday Superpowers You Get from NLP

You probably use NLP all the time without even knowing it. It's the secret helper that makes our gadgets feel so smart and easy to use.

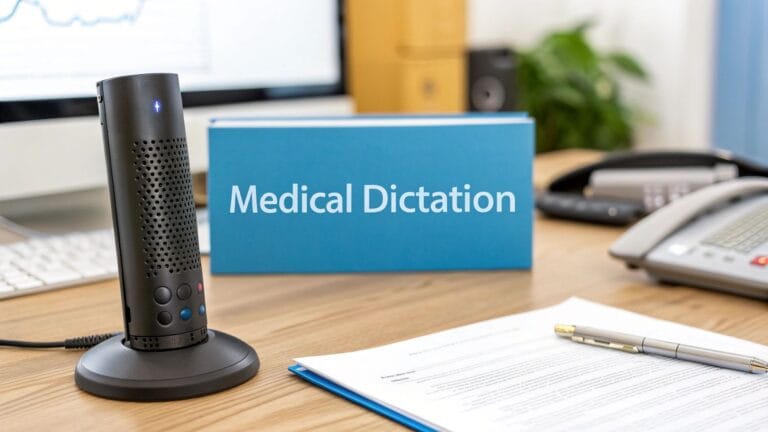

Think about the last time you asked your phone, "What's the weather like?" That's NLP! A superpower called speech recognition turns your spoken words into text. Then other NLP skills figure out your question and find the answer for you.

Or how about when you visit a website in a different language? Your computer might ask if you want it translated. That's NLP again, changing the words from one language to another in just a second.

Spotting Junk and Saving Time

One of the most helpful jobs NLP does is keeping your email inbox clean. Your email uses text classification to look at new messages and decide if they are junk. This saves you from having to look at a lot of silly ads.
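A naive Bayes classifier is one common textbook way to sort messages. It learns from labeled examples which words show up more often in junk. The Python sketch below uses a tiny made-up training set. Real email filters learn from far more mail and often look at more than just words, so this illustrates the idea and is not how any particular email service works.

```python
import math
from collections import Counter

# A handful of made-up training emails, each labeled "junk" or "ok".
EXAMPLES = [
    ("win a free prize now", "junk"),
    ("claim your free money", "junk"),
    ("cheap pills free offer", "junk"),
    ("lunch at noon tomorrow", "ok"),
    ("here are the meeting notes", "ok"),
    ("can you call me tomorrow", "ok"),
]


def train(examples):
    word_counts = {"junk": Counter(), "ok": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def classify(model, text):
    """Naive Bayes: pick the label whose words best explain the message."""
    word_counts, label_counts = model
    vocab_size = len(set(word_counts["junk"]) | set(word_counts["ok"]))
    total_emails = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in ("junk", "ok"):
        # Start from how common this label is, then add evidence from each word.
        score = math.log(label_counts[label] / total_emails)
        words_in_label = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one smoothing: unseen words get a small chance instead of zero.
            count = word_counts[label][word]
            score += math.log((count + 1) / (words_in_label + vocab_size))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(EXAMPLES)
print(classify(model, "free prize money"))  # junk
print(classify(model, "call me tomorrow"))  # ok
```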

This same idea helps people in important jobs, too. Doctors have a lot of notes to write. Some tools can listen to a doctor's voice and type out the medical notes accurately.

This is more than just typing what it hears. The computer knows special medical words and can even pull out the most important points. This gives doctors more time to help their patients instead of doing paperwork.

This shows how NLP can understand words to help with real problems. For example, a doctor might need to turn long notes into a short list for a patient. A tool like a bullet point summarizer uses NLP to find and list the most important information.
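"Extractive" summarization is one simple way a summarizer could pick the important points. It scores each sentence by how often its words appear in the whole text, then keeps the top few as bullets. This Python sketch uses a tiny hand-written list of "little words" to ignore and some made-up doctor's notes. It is not how any particular bullet point summarizer works, and many modern tools write new sentences with a language model instead.

```python
import re
from collections import Counter

# Common little words that don't tell us much; this short list is made up for the example.
STOP_WORDS = {"the", "a", "an", "and", "is", "to", "of", "in", "for", "with", "at", "on", "it", "was"}


def content_words(text: str) -> list[str]:
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOP_WORDS]


def summarize(text: str, max_points: int = 2) -> list[str]:
    # Split into sentences after ".", "!" or "?".
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence: str) -> float:
        # Average importance of the sentence's words across the whole text.
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:max_points])  # keep the original order
    return ["- " + sentences[i] for i in chosen]


notes = (
    "The patient should take the medicine twice a day. "
    "The weather was nice. "
    "Take the medicine with food. "
    "The patient likes to read."
)
for point in summarize(notes):
    print(point)
```

The two medicine sentences win because "patient," "take," and "medicine" each appear more than once in the notes.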

Learning what natural language processing is really comes down to seeing all the smart ways computers help us every day.

Here are some of the amazing jobs NLP does for us.

NLP Superpowers in Action

This table shows a few of these "superpowers" and where you can find them.

| NLP Superpower | What It Does | Where You See It |
|---|---|---|
| Speech Recognition | Turns your spoken words into text a computer can read. | Asking your smart speaker for a song or talking to your phone to send a text. |
| Machine Translation | Quickly changes words from one language to another. | Using an app to read a menu in a different country. |
| Text Classification | Sorts writing into different groups. | Your email sending junk mail to a special folder. |
| Summarization | Finds the most important ideas from a long story. | Getting a short version of a news story so you don't have to read the whole thing. |

From keeping your email clean to helping you talk to people around the world, NLP is a big part of how we use technology today.

Where NLP Shines and Where It Still Struggles

Natural Language Processing is like a very smart helper. It's great at some things, but not so great at others. Knowing what it can and can't do helps us use it better.

NLP is best at finding patterns in a lot of writing—way more than a person could ever read. This makes it a champion at doing the same language job over and over.

For example, it can look through thousands of news articles to find every time a company is mentioned. Or it can read a product review and guess if the person liked the product or not. This helps companies know what their customers are thinking.
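Finding every mention of a company can start as a simple search. This Python sketch uses a made-up company name, "Acme." It only catches exact spellings, ignoring upper or lower case. A real NLP tool would also need to handle nicknames, or phrases like "the company" that point back to the name.

```python
import re


def find_mentions(articles: list[str], company: str) -> list[tuple[int, int]]:
    """Return (article number, character position) for every mention of `company`."""
    # \b means "word edge", so "Acme" will not match inside "Acmeville".
    pattern = re.compile(r"\b" + re.escape(company) + r"\b", re.IGNORECASE)
    hits = []
    for number, article in enumerate(articles):
        for match in pattern.finditer(article):
            hits.append((number, match.start()))
    return hits


articles = [
    "Acme opened a new store.",
    "Shares of ACME rose today. Acme is growing.",
    "Nothing about the company here.",
    "Acmeville is a small town.",
]
print(find_mentions(articles, "Acme"))  # [(0, 0), (1, 10), (1, 27)]
```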

It's also great at pulling out specific facts from a messy document. An NLP tool can read a long story and pick out all the names of people, places, and dates. This is super helpful for organizing notes or cleaning up a recorded meeting. You can see how an entity recognition tool does this automatically.
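Real entity recognition tools learn to spot names from many labeled examples. The Python sketch below is much cruder and only shows the idea. It relies on small hand-made lists of known people and places, which are hypothetical, plus a pattern for dates written like "March 3, 2024."

```python
import re

# Hypothetical lookup lists; real entity recognition tools learn from labeled examples instead.
KNOWN_PEOPLE = {"Maria", "Sam"}
KNOWN_PLACES = {"Chicago", "Paris"}
MONTHS = "January|February|March|April|May|June|July|August|September|October|November|December"
# A month name, a day number, and an optional ", year".
DATE_PATTERN = re.compile(rf"\b(?:{MONTHS}) \d{{1,2}}(?:, \d{{4}})?\b")


def find_entities(text: str) -> dict[str, list[str]]:
    entities = {"person": [], "place": [], "date": DATE_PATTERN.findall(text)}
    # Look at every capitalized word and check it against the lists.
    for word in re.findall(r"[A-Z][a-z]+", text):
        if word in KNOWN_PEOPLE:
            entities["person"].append(word)
        elif word in KNOWN_PLACES:
            entities["place"].append(word)
    return entities


print(find_entities("Maria met Sam in Chicago on March 3, 2024."))
```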

Where It Gets Confused

Even with all its skills, NLP can get confused by the tricky parts of how we talk. It doesn't have memories or feelings, so it doesn't always understand jokes or when we don't mean exactly what we say.

It often gets tripped up by things like:

- Sarcasm: If you write, "Oh great, another chore," the computer might think you're happy about it. It reads the words but misses the feeling behind them.

- Jokes: Jokes are hard for computers. They often use clever word tricks or things about our world that computers just don't know.

- Sayings: If you say, "He spilled the beans," a computer might think you're talking about real beans. It doesn't know this is a saying that means telling a secret.

This shows us something important: NLP is great at understanding the words we use, but it's still learning about the world we live in. It's an expert at finding patterns, not at reading minds.

These are not failures, just reminders of what the technology is. NLP is an amazing helper for working with language, but it doesn't understand things the way a person does.

Common Questions About Natural Language Processing

You might still have some questions about natural language processing. Let's answer some common ones in a simple way.

Is NLP the Same Thing as AI?

Not exactly, but they are part of the same family. Think of Artificial Intelligence (AI) as the big idea of making smart machines. NLP is just one part of AI that is all about understanding language.

So, all NLP is AI, but not all AI is about language. Some AI helps drive cars or play games.

Can NLP Actually Understand My Feelings?

It can make a good guess. A trick called sentiment analysis lets computers look at words and decide if they sound happy, sad, or just okay. It learns that words like "love" and "great" are usually happy, while words like "hate" and "terrible" are usually sad.

But it's important to know that NLP doesn't actually feel anything. It's just very good at matching words to feelings it has seen before. It's more like a detective finding clues in your words than a friend who knows how you feel.
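Here is a bare-bones Python sketch of the word-matching idea, using small made-up lists of happy and sad words. Real sentiment analysis tools usually learn their clues from many labeled examples rather than a fixed list.

```python
import re

# Tiny hand-made word lists; real sentiment tools learn from many labeled examples.
HAPPY_WORDS = {"love", "great", "happy", "awesome", "fun"}
SAD_WORDS = {"hate", "terrible", "sad", "awful", "boring"}


def sentiment(text: str) -> str:
    words = re.findall(r"[a-z']+", text.lower())
    # +1 for every happy word, -1 for every sad word.
    score = sum(w in HAPPY_WORDS for w in words) - sum(w in SAD_WORDS for w in words)
    if score > 0:
        return "happy"
    if score < 0:
        return "sad"
    return "okay"


print(sentiment("I love this great game!"))  # happy
print(sentiment("That movie was terrible"))  # sad
print(sentiment("The bus comes at noon"))    # okay
print(sentiment("Oh great, another chore"))  # happy (the sarcasm is missed)
```

The last line shows the sarcasm problem. "Oh great, another chore" contains the word "great," so a simple word counter calls it happy.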

Will Computers Ever Talk Just Like Humans?

We are getting very close, but we're not quite there. Today's computers are great at sounding like real people, but they still miss the little things that make us human, like telling a good joke or understanding sarcasm. These things come from experiences we share, which computers don't have.

This is a big reason why AI-generated content still struggles to feel 100% real. The technology is getting better very fast, but for now, nothing beats a real chat with a real person.

Ready to put the power of your own voice to work? With WriteVoice, you can dictate notes, draft emails, and create documents up to four times faster than typing. See how much time you can get back in your day.